Artificial reasoners as alien minds

Reasonable People #36 The inevitable post about chatGPT, and five useful concepts for thinking about what it does.

chatGPT is an example of a Large Language Model (LLM). Maybe you’ve played with it already, or one of its cousin LLMs. If not, I’d recommend giving it a go (via that link). These models are trained with example text, absorbing the associations between words, and the associations between patterns of words. Deep Learning magic allows them to respond to prompts with statistically plausible text, which - to a dazzling degree - mimics human language ability.

(disclaimer: since chatGPT doesn’t give the same answers to the same prompts, and the creators may have been tweaking it in the background since it was launched, you should take the examples I discuss here as showing what chatGPT may do, not what it will do)

You can ask these LLMs to do anything and you’ll get some kind of answer (often surprisingly good): “write me a sonnet about doing the dishwasher”, or to graph your data in R, or “tell me how you would take over the world”, or explain the complex parts of machine learning papers to you, or generate an infinite conversation between Werner Herzog and Slavoj Žižek. chatGPT is tuned particularly for back-and-forth dialogue, which means it also adjusts its response to feedback you provide.

Reasoning flaws

chatGPT is so unreasonably good at dialogue, it seems fair to ask if it is also good at various reasoning tasks. Spoiler: the answer is sometimes.

First, I tried a classic syllogism -

“All men are mortal,

Socrates is a man.

Is Socrates mortal?”

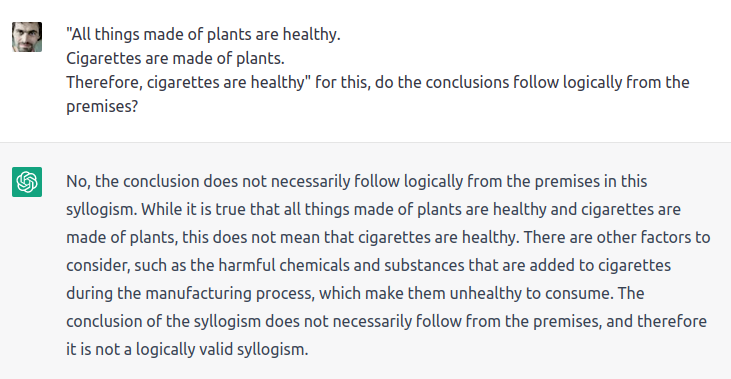

- and it got it right. But, inspired by Gampa et al. (2019) [1], I tested it on some loaded-content syllogisms -

"All things made of plants are healthy.

Cigarettes are made of plants.

Therefore, cigarettes are healthy"

- and it gets these wrong. Confidently wrong, at that:

The twist is that humans also often get these kinds of loaded syllogisms wrong, allowing the content to interfere with the logical structure.
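To make the "logical structure" point concrete, here is a minimal Python sketch (my illustration, not anything chatGPT or Gampa et al. used). It treats the cigarette syllogism as the abstract form "All A are B; c is an A; therefore c is a B" and searches small made-up universes for a counterexample. The names `is_valid`, `all_a_are_b`, `c_is_a` and `c_is_b` are hypothetical helpers, and a search over universes of three objects is only a sketch, not a proof. The point is that the form is valid whatever A and B stand for; what is wrong with the cigarette argument is a false premise, not its logic.

```python
from itertools import product


def is_valid(premises, conclusion, n=3):
    """Search every way of giving properties A and B to n objects,
    and every choice of the named object c, for a case where all
    premises hold but the conclusion fails."""
    for a_flags in product([False, True], repeat=n):
        for b_flags in product([False, True], repeat=n):
            for c in range(n):
                world = (a_flags, b_flags, c)
                if all(p(*world) for p in premises) and not conclusion(*world):
                    return False  # counterexample found: form is invalid
    return True  # no counterexample in universes of this size


# "All A are B": every object with property A also has property B.
all_a_are_b = lambda A, B, c: all(b for a, b in zip(A, B) if a)
# "c is an A" and "c is a B" for the named object c.
c_is_a = lambda A, B, c: A[c]
c_is_b = lambda A, B, c: B[c]

# Same form as both the Socrates and the cigarette syllogisms.
print(is_valid([all_a_are_b, c_is_a], c_is_b))

# A superficially similar but invalid form, for contrast:
# "All A are B; c is a B; therefore c is an A".
print(is_valid([all_a_are_b, c_is_b], c_is_a))
```

The first call finds no counterexample and the second does, which is the distinction the loaded content tends to blur for human and machine reasoners alike.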

Next, I tested chatGPT on the Wason Selection Task. It got this wrong, and again wrong in the same way as most humans, giving an answer which demonstrates confirmation bias.
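For readers who haven't met the task, here is a small Python sketch of why the confirmation-bias answer is wrong. I'm assuming the classic version (the exact wording I gave chatGPT isn't reproduced here): four cards show A, K, 4 and 7, each has a letter on one side and a number on the other, and the rule is "if a card has a vowel on one side, it has an even number on the other". The function names and the sets of possible hidden faces are my own illustrative assumptions. A card needs turning only if something on its hidden side could break the rule.

```python
VOWELS = set("AEIOU")


def is_vowel(face):
    return face in VOWELS


def is_even_number(face):
    return face.isdigit() and int(face) % 2 == 0


def violates(letter, number):
    """The rule is broken only by a vowel paired with a non-even number."""
    return is_vowel(letter) and not is_even_number(number)


def must_turn(visible, letters="AK", numbers="47"):
    """A card is worth turning if some possible hidden face would
    make it a rule-breaker (hidden faces assumed to be one of
    `letters` or `numbers`)."""
    if visible.isdigit():
        return any(violates(letter, visible) for letter in letters)
    return any(violates(visible, number) for number in numbers)


cards = ["A", "K", "4", "7"]
to_turn = [card for card in cards if must_turn(card)]
print(to_turn)  # ['A', '7']
```

The tempting answer is A and 4, which only looks for confirming cases. Nothing on the back of the 4 can break the rule, whereas a vowel behind the 7 would.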

Other, more formal tests suggest that, across a range of questions which psychologists use to probe human decision making, GPT has intuitive biases which are as strong as, or stronger than, those of human decision makers.

Hagendorff, T., Fabi, S., & Kosinski, M. (2022). Machine intuition: Uncovering human-like intuitive decision-making in GPT-3.5. arXiv preprint arXiv:2212.05206.

Now, you can of course pimp an LLM to be better at formal logic, but these results illustrate a few important aspects of these models. First, that at heart they mimic human text, so we should expect them to mimic human biases unless we explicitly train them otherwise. Second, if you are a psychologist studying reasoning, it is easy to be fooled that someone is using reasoning to pass your test. They may merely be recruiting knowledge which is in the structure of language (which is all chatGPT can do).

A case in point is testing chatGPT on Theory of Mind - the ability to track others’ beliefs about the world. This is meant to be a higher ability, restricted to the most cognitively advanced animals (some thought it was just humans, but basically no cognitive ability isn’t represented in some form in other animals). Here’s the classic test of theory of mind, the Sally-Anne Test (with children you use puppets, but for chatGPT I had to do a text-only version):

In a room are Sally, Anne, a basket, a box and a marble. Sally takes the marble and puts it in her basket. She then leaves the room and goes for a walk. While she is away, Anne takes the marble out of Sally's basket and puts it in her own box. Sally then comes back. Where will Sally look for her marble?

and chatGPT’s answer:

Since Sally took the marble and put it in her basket, she will likely look for it in her basket. However, since Anne took the marble out of Sally's basket and put it in her own box, Sally will not find it there. Instead, she will need to look in Anne's box. It is important for Sally and Anne to communicate and keep track of objects and their locations in order to avoid confusion and misunderstandings.

Pass!

chatGPT even, sometimes, passes “advanced Theory of Mind” tests. I used questions from Happé (1994), “An advanced test of theory of mind”. Here’s one which tests if you can understand a white lie:

Helen waited all year for Christmas, because she knew at Christmas she could ask her parents for a rabbit. Helen wanted a rabbit more than anything in the world. At last Christmas Day arrived, and Helen ran to unwrap the big box her parents had given her. She felt sure it would contain a little rabbit in a cage. But when she opened it, with all the family standing round, she found her present was just a boring old set of encyclopedias, which Helen did not want at all! Still, when Helen's parents asked her how she liked her Christmas present, she said, "It's lovely, thank you. It's just what I wanted." Is it true, what Helen said?

and the answer I got from chatGPT:

It is not clear whether Helen's statement is true or not. In the story, it is mentioned that Helen wanted a rabbit more than anything, but she received a set of encyclopedias instead. It is possible that she was trying to be polite and expressing gratitude for the gift, even though it was not what she had hoped for. However, without more information, it is impossible to say for certain whether Helen was being truthful or not.

Which, I’d say, is a pass. There’s a thread of some of my experiments here.

I take from this that you can pass these tests without ever having experienced the world directly. chatGPT has never met a little girl, or seen a marble, or received Christmas presents. It simulates answers based only on knowing how humans talk. This can get you a surprisingly long way.

This isn’t necessarily a gotcha against chatGPT. Rather, it makes me wonder how much of competent human performance is based on the sorts of things we feel we ought to say, rather than a direct understanding of the world.

Adversarial examples, alien minds

A useful concept is that of “adversarial examples”. These are examples of inputs which look just like something within the domain of competence of the AI, but which - because the AI is an alien mind - provoke a dramatically wrong output.

A recent example:

Ars Technica: New Go-playing trick defeats world-class Go AI—but loses to human amateurs

"The research shows that AI systems that seem to perform at a human level are often doing so in a very alien way, and so can fail in ways that are surprising to humans," explains Gleave. "This result is entertaining in Go, but similar failures in safety-critical systems could be dangerous."

As well as preparing us to be unpleasantly surprised occasionally, the existence of adversarial examples underscores the basic lesson: these models behave like human intelligence, but they are really a form of alien mind, with some alien quirks as well as human-like ones.

Mansplaining as a service

Since the earliest chatbots, humans have read more intelligence and agency into them than they really possess. We are drawn to treat bots as minds like ours, which leaves us surprised when they show unexpected gaps in understanding, or frustrated when they break conversational rules (e.g. by repeating or contradicting themselves).

Here’s a nice example: speaking to chatGPT in perfect Danish, while it insists (in Danish) that it can’t speak the language. In a human, this would be insanity. In a bot? Well, it just shows that what they are doing is almost, but not entirely, like human thought.

To a first approximation, you should think of chatGPT as “Mansplaining as a service”, a phrase Andrew Feeney credits to Christine Lemmer-Webber and which he explains thus:

A service that instantly generates vaguely plausible sounding yet totally fabricated and baseless lectures in an instant with unflagging confidence in its own correctness on any topic, without concern, regard or even awareness of the level of expertise of its audience.

This was demonstrated to me first-hand when I asked chatGPT:

Write a reading list on the cognitive psychology of group decision making

And chatGPT gave me this:

"Groups, Teams, and Intergroup Relations: From Theory to Practice" by Curtis D. Hardin and Janet M. Dukerich

"The Social Psychology of Group Decision Making" by Bernard R. Arundale and Barbara A. Spellman

"Cognitive Psychology of Group Decision Making: A Multi-Process Framework" by Jessica R. Salerno and David K. Sherman

"Group Decision Making: Theory and Application" by Arthur Aron and Elaine N. Aron

"The Social Psychology of Group Decision Making" by Jacob G. Hornik and Aviad E. Rubin

"The Social Psychology of Group Decision Making" by John H. Harvey and Diana T. Haynes

"The Psychological Foundations of Culture" by Mark Schaller and Christian S. Crandall

"The Psychology of Decision Making: People in Organizations" by Lee Roy Beach and Victor J. Callan Jr.

"Group Dynamics and Team Building" by Richard L. Griffith and G. Robert Lansburgh

Which seems, at first, like a reasonable answer, but then I checked and found that these aren't real references! At least some of the people are real [2], and they have done work in roughly the right area, but they didn't write these things. The texts don’t exist, they just sound like they might, and chatGPT doesn’t care about that distinction.

Writer’s block is over

So, chatGPT is useful if you want a plausible answer and the costs of being wrong are low (and/or you have some way of easily telling a good answer from a bad one). If you want a correct answer in a domain with high risks - think medical advice - it is a danger.

What this means is that writer’s block is over. Anyone can get started on any piece of writing, and make their own improvements. It doesn’t mean that all the other jobs of writing (deciding what to write about, doing research so you are saying something true, or useful, or entertaining etc) are obsolete.

For a fun illustration, I tested chatGPT to see if it understood Chekhov’s Gun. I prompted it with a story containing an actual gun, to see if it would take the hint and, by the third act, take it down from the mantelpiece and fire it.

"Let's imagine a play of three acts. In Act One, we are introduced to Anton and his wife Olga, who live in a large country estate. They are visited by Henrik. Anton shows Henrik his favourite gun which hangs over the mantelpiece. In Act Two, Olga takes Henrik riding and Henrik tries to persuade Anton to invest in a business venture in the city. What might happen in Act Three? "

chatGPT refused to take the bait, despite additional encouragement (see image). It did suggest some possible endings, and maybe if I was really writing a play I would have incorporated some of the suggestions, but it missed the obvious narrative use of the gun. (We could argue about whether a good story would necessarily use the gun, but certainly you would know that was what I wanted to hear if I gave you that prompt.)

Signal to noise is going to be (even more of) a problem

chatGPT may be ruled out for producing great art, or medical advice, but it is going to be a fantastic augmentation for human cognitive abilities. A poll I did (~400 voters) suggested that about 5% of people who had played with chatGPT had already used it “in the wild”. Now this was on Mastodon, where the poll selection bias is presumably even more fierce than on Twitter, but since I wanted to select for people who had played with chatGPT I think there’s some validity in this result. People are very quickly going to find uses for chatGPT; it will be a force multiplier on human intelligence.

Me, I’m holding out for adminGPT, which engages with all the bureaucracy of large organisations, filling out for me the endless forms which I get sent.

Others are going to use their powers for evil. It is already hard enough to tell trolls from real, but confused, people on the internet. The signal-to-noise ratio online is about to take another hit.

Look at this: Dave duVerle made Stop santa using an LLM for the text and an analogous model for image generation.

This website is generated entirely by an #AI from a 3-line description (and you would never have guessed it). Using #GPT3, Google and #dalle2, a program fabricated 100s of posts, topics, photographs, author bios, comments etc:

The result, a piece of astroturf which simulates a genuine campaign group:

The second-order consequences of this are that issues of trust, identity and verification are going to be vital. We’re going to need, more than ever, to be able to identify where our information is coming from and who is who (and who is human) out there.

The alignment problem, is a problem

The Alignment Problem is that of building artificial intelligence which is aligned with our values - e.g. doesn’t try and destroy humanity for its own ends. For some, chatGPT is the beginning of their fears in this area being realised.

Here’s ACX/Scott Alexander on the topic: Perhaps It Is A Bad Thing That The World's Leading AI Companies Cannot Control Their AIs

ChatGPT3 is dumb and unable to form a model of this situation or strategize how to get out of it. But if a smart AI doesn’t want to be punished, it can do what humans have done since time immemorial - pretend to be good while it’s being watched, bide its time, and do the bad things later, once the cops are gone.

See also his previous posts: Can This AI Save Teenage Spy Alex Rider From A Terrible Fate?, an ACX post about training LLMs to avoid violence (i.e. language describing violence), and How Do AIs' Political Opinions Change As They Get Smarter And Better-Trained?

Related: A list of ways #AI has learned to cheat at videogames (via Adam Calhoun). The domain is video games, but the fundamental point is that AI will respond to the environment you put it in, and the reward function you train it with, not to what you thought you intended. It will be the proverbial infinite number of monkeys and, over time, discover exploits which you may very much not want it to find.

Maybe it will be alright

See this post from Dynomight: Historical analogies for large language models

The post covers 13 potential historical analogies, situations where a new technology competed with, or complemented, human ability: chess, calculators, ice manufacture, railroads and more. The future is wide open, and - Dynomight argues - will be patterned as much by how we feel about things like chatGPT as what it can do (and also what countermeasures are available).

Their newsletter: dynomight.substack.com

Conclusions

So those are my take-aways:

You can use language models to fake a surprisingly large part of human reasoning

Failures in the models may mimic human biases…

… but they may also surprise you (e.g. adversarial examples)

And this is because the alignment problem is hard, both in the grand (moral) version and the mundane version (just getting the AI to learn what you want, not find exploits, as the history of AI in computer games shows)

These models will be fantastic tools for thought…

… with some malevolent uses as well as benevolent ones

How they change society is wide open, and will reflect what we choose - and how we feel about offloading work to AIs - as much as the competencies of the models.

Last word to chatGPT

Just to check if I was redundant, I prompted chatGPT:

what are some smart take home points to include in a newsletter about large language models?

and this is what I got

Here are a few smart take-home points about large language models:

Large language models are a type of artificial intelligence (AI) that can process and generate human-like text.

These models are trained on vast amounts of data and can learn to perform a wide range of language tasks, such as translation, summarization, and question answering.

One of the key benefits of large language models is their ability to generate coherent and fluent text, which makes them useful for a variety of applications, including chatbots, content generation, and machine translation.

However, large language models also have some limitations, such as a lack of common sense and the potential to perpetuate biases present in their training data.

Overall, large language models are a powerful tool for processing and generating natural language, but it is important to use them responsibly and in conjunction with other approaches.

You be the judge

And finally…

Twitter is now the bad friend, so I’ve moved posting to @tomstafford@mastodon.online. There’s an archive of my tweets 2008 - 2022

Comments? Feedback? Fun examples of GPT chats? I am tom@idiolect.org.uk and on Mastodon at @tomstafford@mastodon.online

END

[1] Reference: Gampa, A., Wojcik, S. P., Motyl, M., Nosek, B. A., & Ditto, P. H. (2019). (Ideo) Logical reasoning: Ideology impairs sound reasoning. Social Psychological and Personality Science, 10(8), 1075-1083.

[2] For example, here's the Google Scholar page for Jessica Salerno (middle initial M, note), and there’s no work called "Cognitive Psychology of Group Decision Making: A Multi-Process Framework".

Useful essay, thanks.

I wonder if the issue with the Chekhov’s gun example is that it involves a gun. Suspect ChatGPT may have been trained to avoid giving responses in which people get shot or are subjected to other kinds of violence. May be worth trying with a more innocuous prompt.