Reasons to trust models

Reasonable People #10 How to make computational models better public objects

What are models

Models have been at the heart of the response to coronavirus - what they show, what they assume, where they come from.

In the UK, the team at Imperial published their modelling work on March 16th. At 20 pages, this report is a paradigmatic example of clearly explained science. It supported a dramatic change in government policy: only drastic action to suppress the virus would stop ICU capacity being overwhelmed.

There were reports that the change in policy was driven by a new model, but the truth is that it was estimations from Italy of the proportion of coronavirus cases which required hospitalisation - new data, but fed into the old models - which generated the dire predictions.

A month into our model-driven lockdown there was growing dissatisfaction with the governments consumption and deployment of scientific advice. See this Guardian article from 27th of April ‘growing frustration over the UK government’s claim that it is “following the science”, saying the refrain is being used to abdicate responsibility for political decisions. As well as these complaints about Government’s use of science to avoid of value based choices, there were complaints about the lack of transparency around the scientific advice, and an over-reliance on models, and on a particular kind of model-maker.

Indicators of Trust

Models will continue to be critical to the coronavirus response, and will continue to be both political as well as scientific objects. So I was thinking about exactly what kind objects models are, and when they support good argument, and when they are merely for show.

Models are deduction machines. We define a mathematical structure based on our assumptions, turn the handle (i.e. ask the computer to crunch the numbers) and the result is inevitable. In the sense that models produce tautologies, they can’t be right or wrong, just relevant or irrelevant. You criticise a model by criticising its assumptions, or by criticising its interpretation.

At this most general level, it is clear that trust in models is supported by a clear audit trail - the major assumptions should be clearly stated (in English), the code should be annotated and available, the range of results from the model available (or, even better, the ability to run it yourself).

Not like this preprint on the influence of having had a BCG vaccination on your coronavirus risk, which surely deserves a shoutout for most inadequate description of how the results were obtained: "Data were analyzed using Matlab scripts.”.

Seriously. That’s it.

Model Flavours

Models also come in different flavours according to their complexity. You can try and fit the data directly (for disease spead something like “assume it is an exponential increase, how many cases will there be in 10 days?”). Or you can add a level of complexity by defining some plausible mechanisms behind the outputs you are measuring (as with the Imperial report model which requires assumptions about the number of people infected by each case, length of incubation period, etc). And you can push further, adding in additional details about, say the evolution of strains of the virus, or the transport links between metropolitan areas, or the movements of individuals. One flavour of these more complex models which gives each person a place, and a health status and behaviour is the so called agent based models.

With modelling there is no limit to the amount of complexity you can add by incorporating additional details. Adding such details can always be justified in the service of ‘realism’, but it doesn’t always add explanatory leverage.

Each field has its own experience of models, and each modeller their own preference for the level of complexity which suits their scientific purposes and tastes. With coronavirus these issues about model choice and model justification become public and critical. I’ve been thinking about what we can learn from the experience with modelling of other fields.

Climate Science is heavily tied up with models and model predictions. Here’s one climate scientist’s take on coronavirus modelling (James Annan), which says epidemiologists missed a trick in not validating their model, by failing to use incoming data to calibrate uncertainty around the key reproductive rate parameter (“what on earth are mathematical epidemiologists actually for, if not this sort of thing?”).

IANAE, but it would be interesting to hear more from climate scientists about why this, and other practices, are key to model promulgation in their area (and from epidemiologists about whether they are, or should be, in theirs).

Neuroscience has its own history of modelling and model controversy. This paper (found via Cian O'Donnell) makes a nice distinction:

Is a more detailed model necessarily more realistic? Consider two models of a plane: a toy plane made of wood and a simple paper plane. The first model is more detailed, and it has different recognizable elements of a plane: wings, helixes, a cockpit. The second model is more abstract, but it has an important characteristic that the first model does not have: it can fly. From a scientific point of view, the first model is detailed but not realistic, since it cannot fly. Level of detail and scientific realism are two different notions.

I love the example because it makes clear that utility isn’t always served by greater realism. Sure, if you want to learn the names of parts of plane the wooden model is better. But if you want to demonstrate flight the paper plane is the better model (as well as being massively simpler to make).

Explainability is a model virtue

The virtues of simplicity are emphasised in coronavirus models, which have to convince scientists and policymakers, but also require some public currency. This value as public currency depends on their accuracy, like all science, but also on their “explainability”.

Explainability is more than just the transparency, in the sense of the ‘the audit trail’ I mentioned above, and something more than the accepted practices of model justification, like the calibration Annan says is critical in climate science. Explainability is something about how easily people’s intuitions cleave to the details of the model.

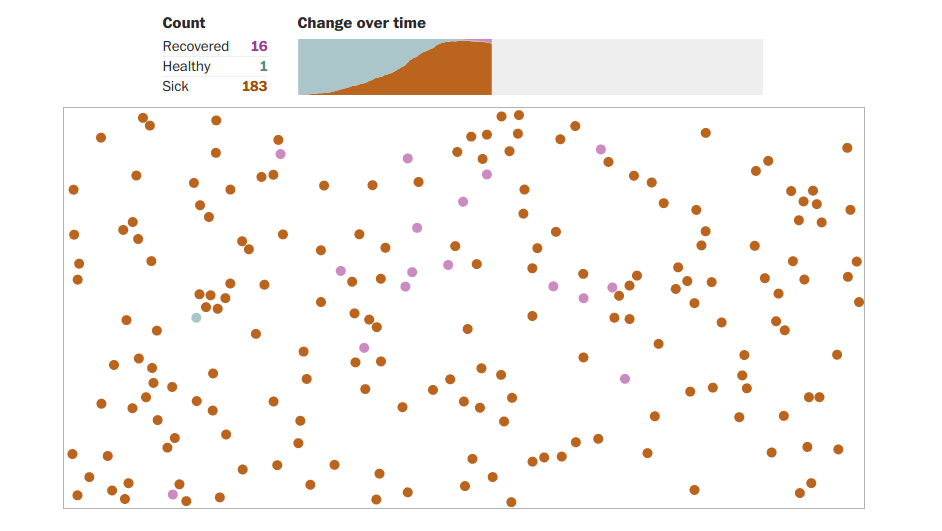

Here, some of the potentially most complex, agent-based,models can have an advantage. We all have existing intuitions for individuals moving in space, and transitioning between different states of health or disease. (Compare this to something like the R parameter, in a notionally less complex model, for which we have immediate intutions).

Here is Harry Steven’s masterful Why outbreaks like coronavirus spread exponentially, and how to “flatten the curve”. This, the most read article in the history of Washington Post, uses a simple visual model (running in the browser!) to illustrate how the dynamics of reduced social contact affect population level infection rates.

You can also shift people’s intuitions. This explorable - What Happens Next? COVID-19 Futures - from Nicky Case and Marcel Salathé uses “playful simulations” to explain standard epidemiological models, and what they say about coronavirus exit strategies.

Explorables like these two help communicate a model’s value, and are a vital part of scientific modelling. While models are developed and validated within a narrow epistemic community this work of explanation can be skimped on, but not when models to move into the wider world, and play a public role.

This is the work

Models are a prosthesis for thinking, enhancing our perception beyond the horizon of individual reason and intuition. To maximise their value, though, the conclusions they support have to be communicable. “The model says X” works from a position of authority (deserved or not), but long term trust needs to be supported by arguments of the form “The model says X because Y”, or “Because Y, the model says X”.

If I was king of the world, I would

Only allow publication of model results alongside a full, annotated, reproducible code base (this is more and more common, but not universal practice)

Make explanation of models as important as development of models (the Imperial report demonstrates one kind of clarity, explainables like Nicky Case’s showcase a next level on top of that. Counter-examples are too common to mention - explanation is its own work, and very hard).

Survey modelling communities from different fields: what has emerged as best practice in model development, and why? How does this differ from what goes on in epidemiology? Disciplines which have particular exposure to public debate - like climate science - would be where I’d look first.

Survey public health interventions which have been affected by models. Why were certain models influential? Why some picked up, others ignored? What features do models have to make them communicable beyond the subdiscipline which initially judges them as successful or not?

The result would be a best practice guide for scientific modelling when model conclusions need to be rapidly communicated and argued over in public.

Thanks to W and K for discussion of trust in the age of covid which prompted this reverie.

Cal Newport in Wired: 'Expert Twitter' Only Goes So Far. Bring Back Blogs

Coins a nice term for how some specialists have received huge increase in followers on twitter during the pandemic: “distributed expertise triage”, and argues that twitter - for all its strengths - is inadequate to epistemically support rapid intellectual response

Twitter was optimized for links and short musings. It’s not well suited for complex discussions or nuanced analyses. As a result, the feeds of these newly emerged pandemic experts are often a messy jumble of re-ups, unrolled threads, and screenshot excerpts of articles. We can do better.

We’ve heard a lot about supply chains for medical equipment, blogs are an essential part of the supply chain for ideas. I’m a huge fan of the earlier days of blogging culture (Call back to RP#8 on Thinking In Public!), but I don’t think the problem is, as Newport seems to suggest, a lack of platforms to support blogging. Something has changed in the attention economy - twitter provides high engagement, and a certain kind of scholar is rewarded by that, but not all (thank goodness). Papers provide depth and are an activity actually rewarded by academic institutions. Blogs fall in between, so you really have to have perverse motivations to invest in writing one.

Paper: Climates of suspicion:‘chemtrail’ conspiracy narratives and the international politics of geoengineering.

Conspiracy theories provide a dark mirror to my optimist’s take on human reasoning. Part of why I find them so interesting is that they are so hard to define. Quassim Cassam makes the point (either in Vices Of The Mind or in this Why We Argue podcast, apologies can’t recall which) that conspiracy theories aren’t defined by theories about a conspiracy. A frequent comment which reveals this is that theories about conspiracies can turn out to be true. Yes, but that doesn’t make them Conspiracy Theories. Conspiracy Theories (capital C, capital T) need more than a theory about a conspiracy, they need to set a minority against the orthodox account, positioning the orthodox account as powerful, malevolent and global.

To see this, consider the 9/11 terrorist attacks. Here was a conspiracy by a secretive terrorist organisation (al-Qaeda) but believing this does not make you a conspiracy theorist. You actually only become a conspiracy theorist when you reject the theory that the attacks were due to a conspiracy by al-Qaeda - the orthodox account - and endorse another account.

In the domain of chemtrails/geoengineering, Cairns, points to “the instability of the distinction between ‘paranoid’ and ‘normal’ views”, and then goes on to analyse chemtrail theories as discourse.

She argues that fact checking and deficit-filling science communication is inherently limited because:

“the strategy of ‘debunking’ arguably misses the point that such beliefs reflect not so much a lack of science as a lack of trust”

And the paper has this amazing quote from a public event where a chemtrail believer kindly took the floor to address a professor of geoengineering in a way that provides perfect illustration of this point:

But it's a question of trust isn't it? […] we see what's going on at the moment, which is definitely not benign, and so therefore we have no trust in you. And we believe that what you're trying to do is an exercise in subterfuge. You are trying to soften the blow so that when you have to admit what's going on, we will then go, oh great okay, no worries, we know you did it to protect us. We don't believe the story. So it's a question of trust.

Elsewhere I have written about our evidence that trust is as important as expertise in communicating scientific risks, and this qualitative account adds meat to that bone.

The paper came to me via @WarrenPearce

Reference:

Cairns, R. (2016). Climates of suspicion:‘chemtrail’conspiracy narratives and the international politics of geoengineering. The Geographical Journal, 182(1), 70-84.

Bonus gag from Vaughan Bell:

How many conspiracy theorists does it take to change a light bulb? The light bulb didn’t change man, that’s WHAT THEY WANT YOU TO THINK!

Aeon: The smart move: we learn more by trusting than by not trusting by Hugo Mercier (Nov, 2019).

All actions yield two things - the specific outcome, and information. The value of these two qualities can diverge. If you drink bleach believing it has health-giving properties then the outcome itself is very negative, but the information is positive - you gain useful knowledge which corrects your previous misunderstanding (although admittedly this information gain would have been better arriving before you drink bleach). There is an asymmetry here - we learn from actions we take, and have to make assumptions about the ones we avoid (which is one of the reasons mistaken estimations of dangers persist longer than mistaken estimation of opportunities).

Mercier uses this insight to argue that we, in general, under trust. Journalism is usually accurate, people are generally honest. Participants in experimental economic games in which people can cooperate for rewards lose out because they don’t trust the other participants as much as they should (as much as would be warranted by the general level of unforced cooperation).

The conclusion that we should trust more has two props: first that our trust is mis-calibrated to reality, second that we learn more from trusting than we do from not-trusting (and hence not acting in ways that assume cooperation, instead falling back on habit or isolation). The two relate, because miscalibration can be corrected by better information, but that is the thing forgone by avoiding trusting actions.

Supporting this, Mercier cites evidence from an experimental game that people highest in trust were also best able to predict who would act trustworthy with them (after having the chance to interact with other participants). People who consume more news, have higher trust in journalists, and people who know more science, trust scientists more. These correlations could work the obvious way around (of course people who trust news more are more likely to read it), but there is also the possibility of the “information gain” account: people who trust more have learnt more about reliability in that domain.

A problem for a blanket “we should trust more” rule is another asymmetry: the asymmetry of costs. Trusting that that bridge will hold might allow you a shorter journey 9 times, but if you plunge to your death in the chasm on journey 10 it was hardly worth it. Because the costs of mistaken trust vary so widely between domains, the moral I take is not that we should trust more, but that we should work hard to work out domains were a general skepticism is sensible (the safety of bridges, financial opportunities, new medicines), and distinguish them from domains where risks are low or merely psychological (games, entertainment, skill development). In those domains we might be over-applying a low-trust rule, and have lots to gain by reckless exploration which yields lots of information very quickly, with the only cost failures that annoy but don’t harm us.

Preprint: The Lure of Counterfactual Curiosity: People Incur a Cost to Experience Regret

In a set of experiments presented in this paper, we address the motivational lure of counterfactual curiosity by exploring people’s willingness to seek information about what they could have won in a modified Balloon Analogue Risk Task... people were willing to seek information about how much they could have won, at a cost, and even though it had little utility and a negative emotional impact (i.e. it led to regret).

FitzGibbon, L., Komiya, A., & Murayama, K. (2019, September 18). The Lure of Counterfactual Curiosity: People Incur a Cost to Experience Regret. https://doi.org/10.31219/osf.io/jm3u

Zeynep Tufeki: Don’t Believe the COVID-19 Models

That’s not what they’re for.

The most important function of epidemiological models is as a simulation, a way to see our potential futures ahead of time, and how that interacts with the choices we make today. With COVID-19 models, we have one simple, urgent goal: to ignore all the optimistic branches and that thick trunk in the middle representing the most likely outcomes. Instead, we need to focus on the branches representing the worst outcomes, and prune them with all our might.

…

Sometimes, when we succeed in chopping off the end of the pessimistic tail, it looks like we overreacted. A near miss can make a model look false. But that’s not always what happened. It just means we won. And that’s why we model.

…And finally

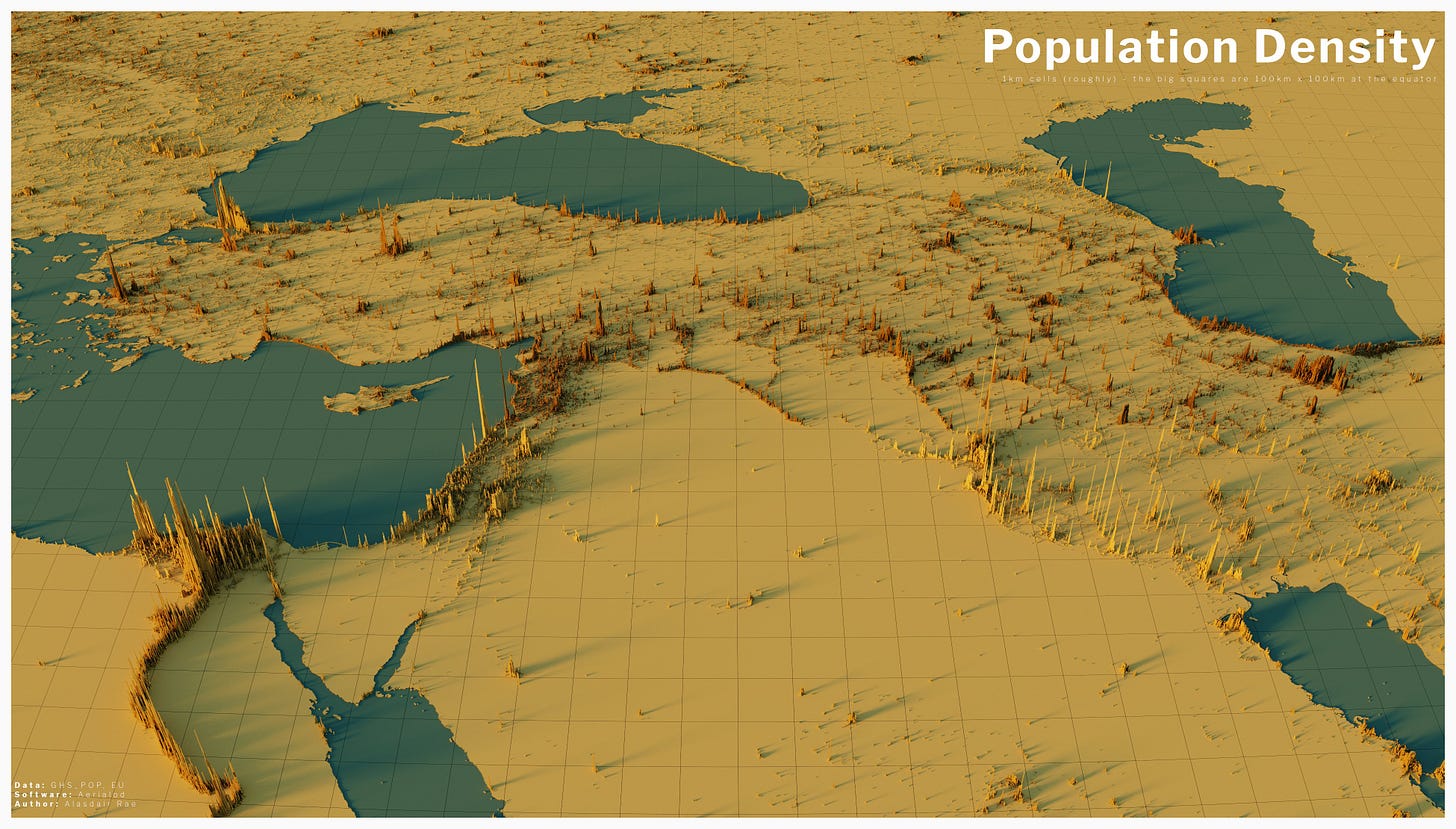

There’s something haunting about these borderless maps by Alasdair Rae, which show projections rendered as if population density was terrain:

here's a view that includes Cairo, Istanbul, Tbilisi, Baku, Tehran, Baghdad and lots of other interesting places.

Link: twitter megathread of different population density renders by @undertheraedar